Opening Up the Future of AI | The Potential of ChatGPT as Presented at TED

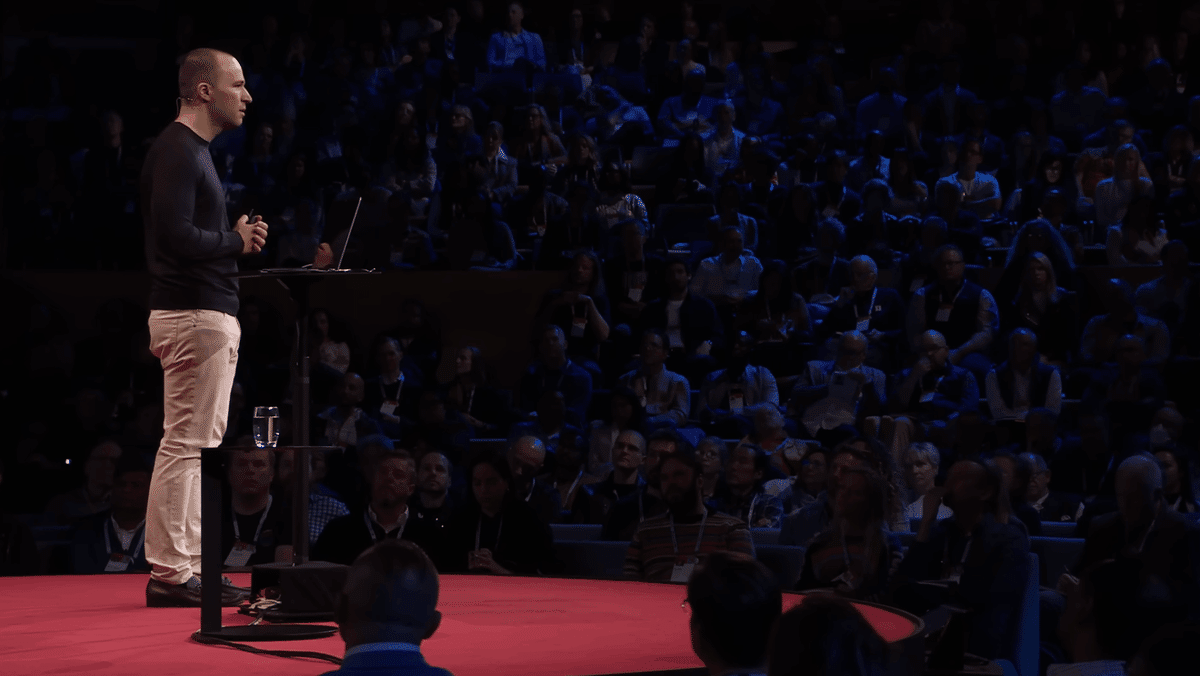

This article reviews what Greg Brockman covered in the TED talk "The Inside Story of ChatGPT’s Astonishing Potential | Greg Brockman | TED," published on April 21, 2023. The talk covers the current state of ChatGPT, upcoming updates, progress in AI technology, applications to data analysis, and possible future uses of ChatGPT. The sections below summarize the main points of the talk in plain terms.

Introduction

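OpenAI was founded seven years before the talk, with the aim of pushing forward what artificial intelligence can do and steering it in a positive direction. Advances in AI are affecting many fields, and in his TED talk Greg Brockman demonstrated several ChatGPT capabilities.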

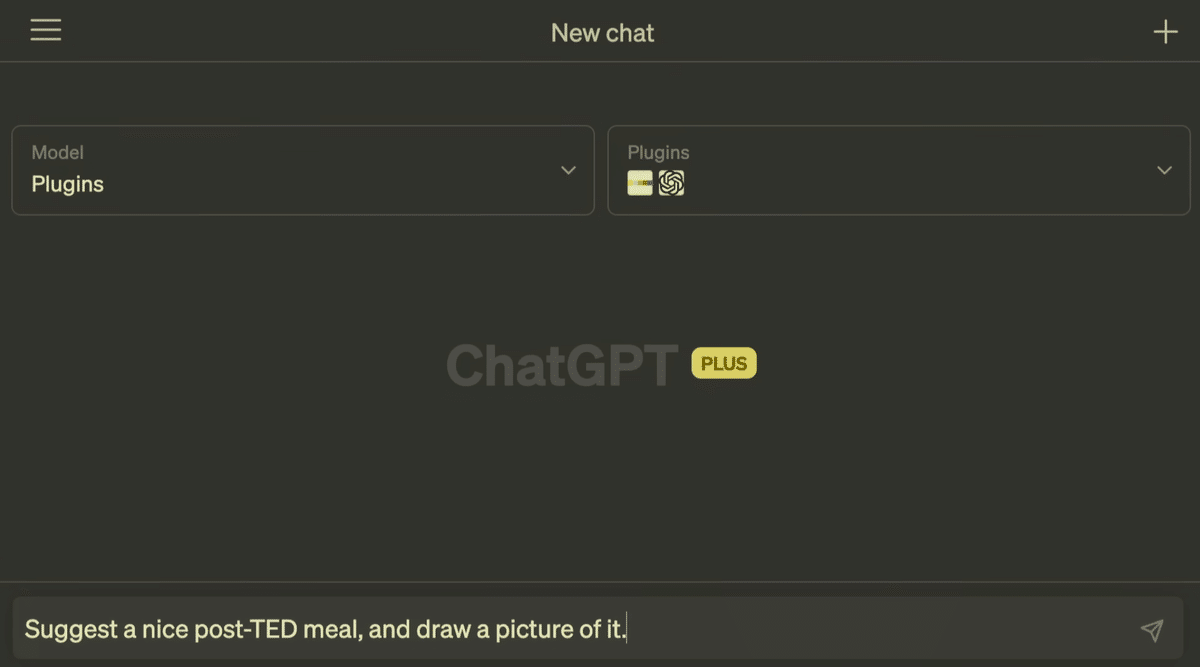

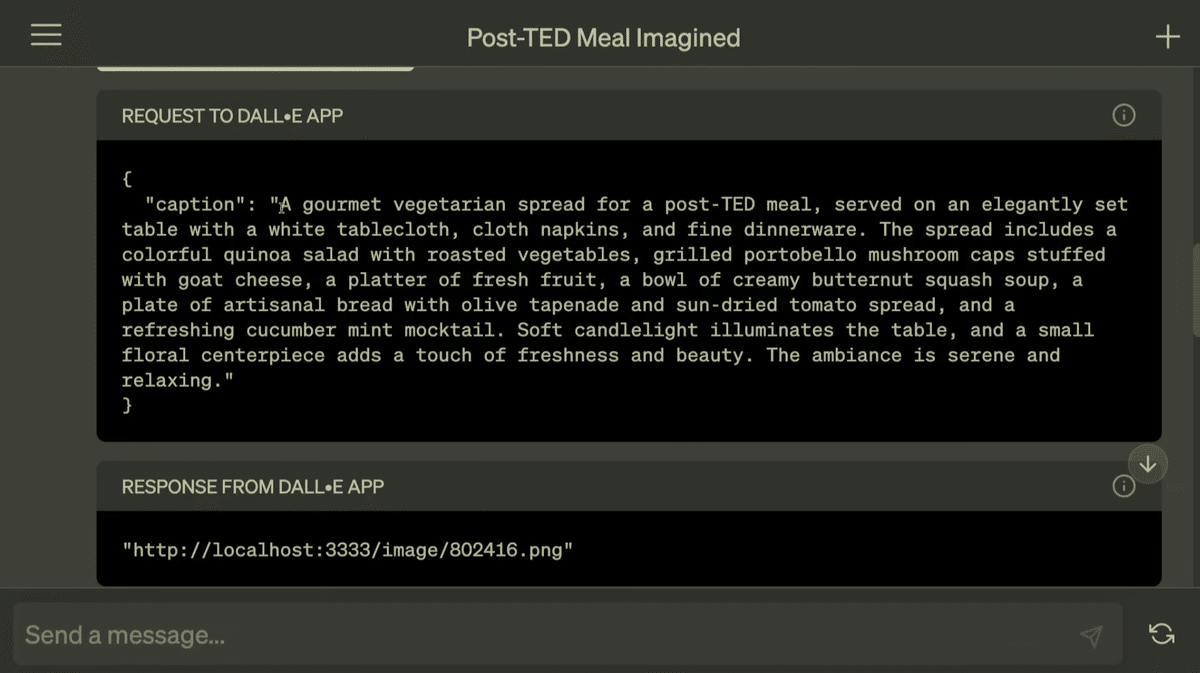

Generating Images with DALL-E from ChatGPT

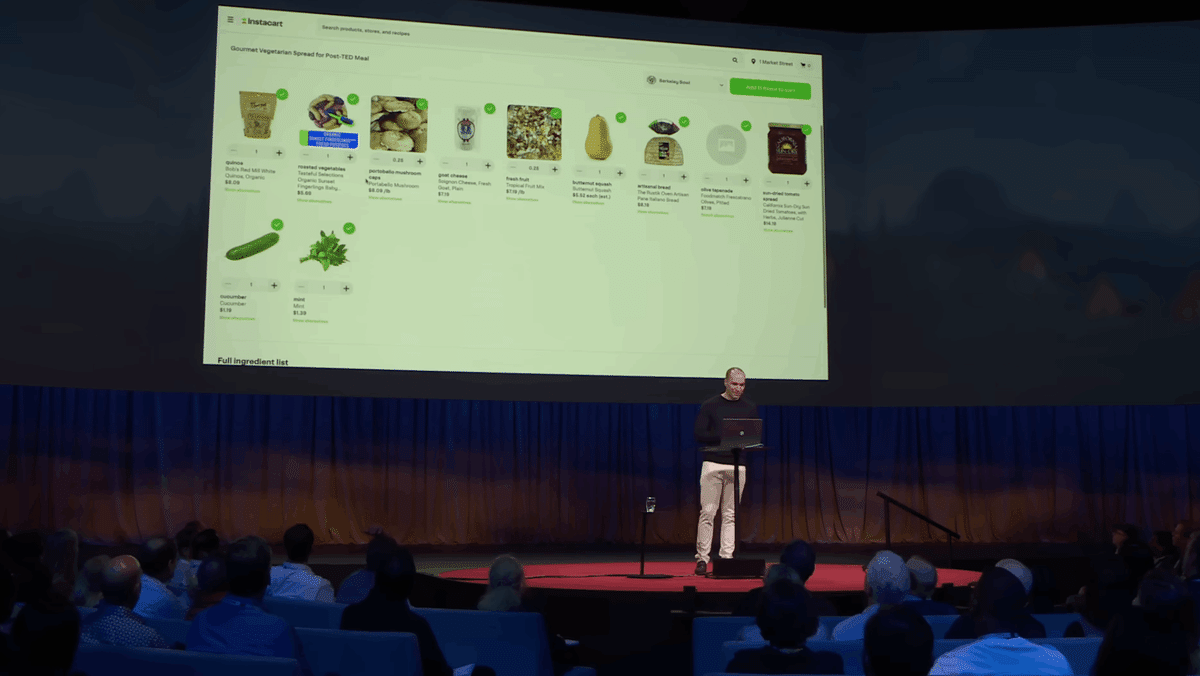

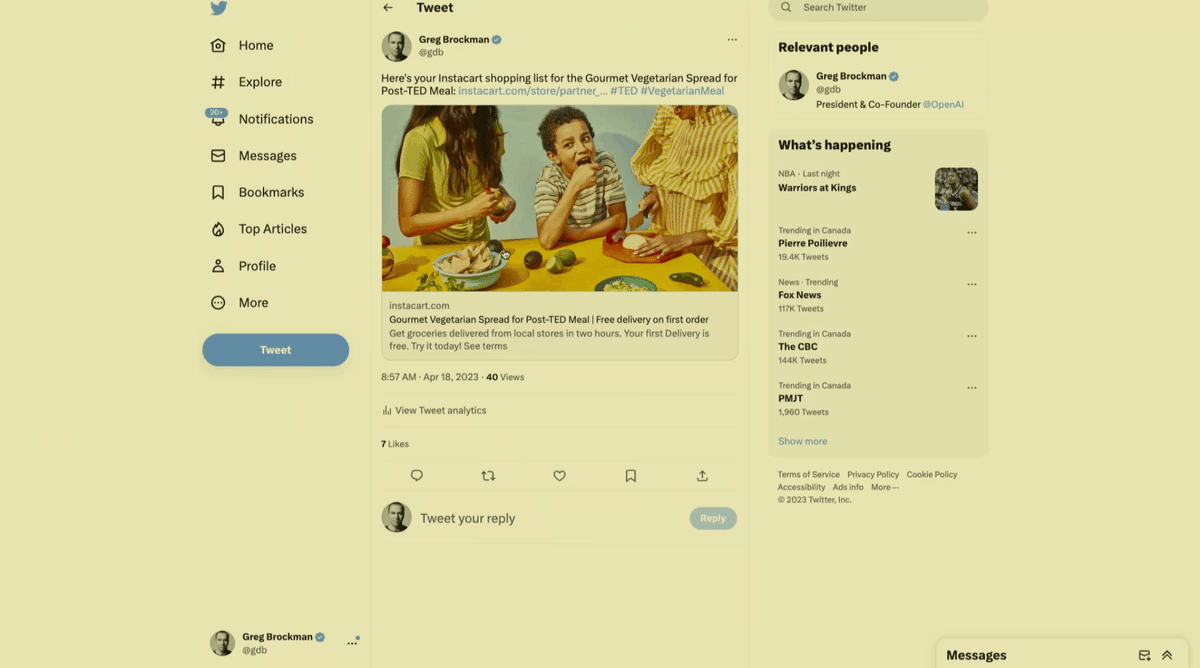

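One of those capabilities is image generation through the DALL-E model, which produces images from user input. At TED, Greg Brockman demonstrated ChatGPT calling the DALL-E model to generate an image inside the conversation.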

Memory

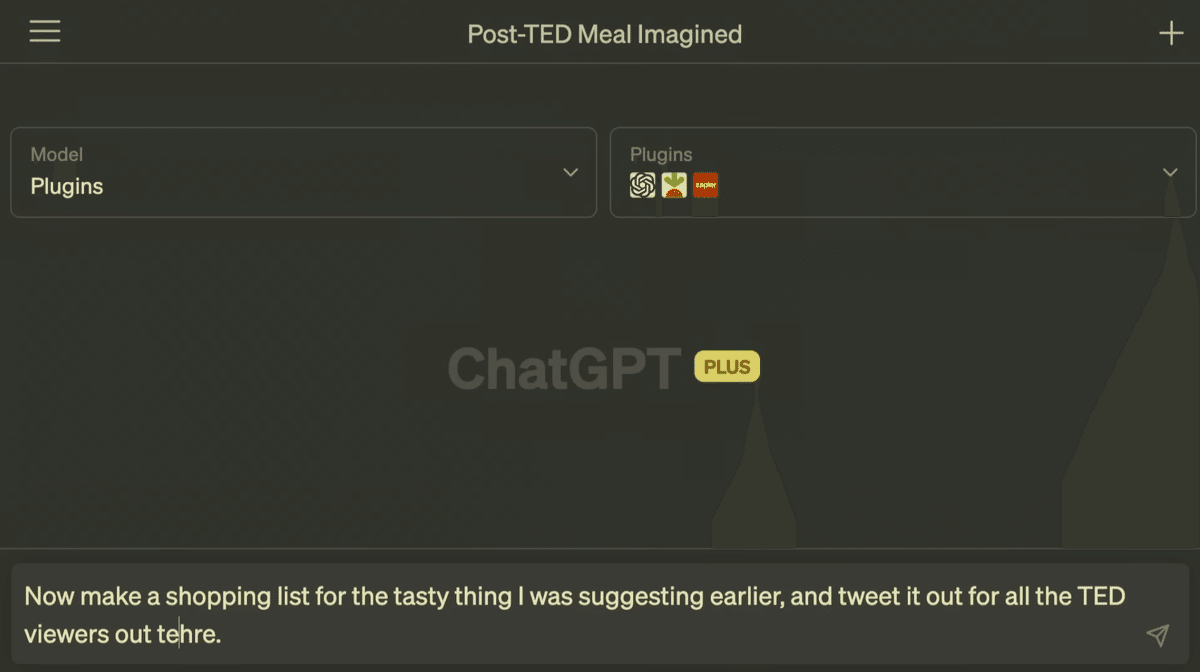

The next capability shown was memory. He demonstrated ChatGPT extended with a memory tool, so that it can save information and recall it later.

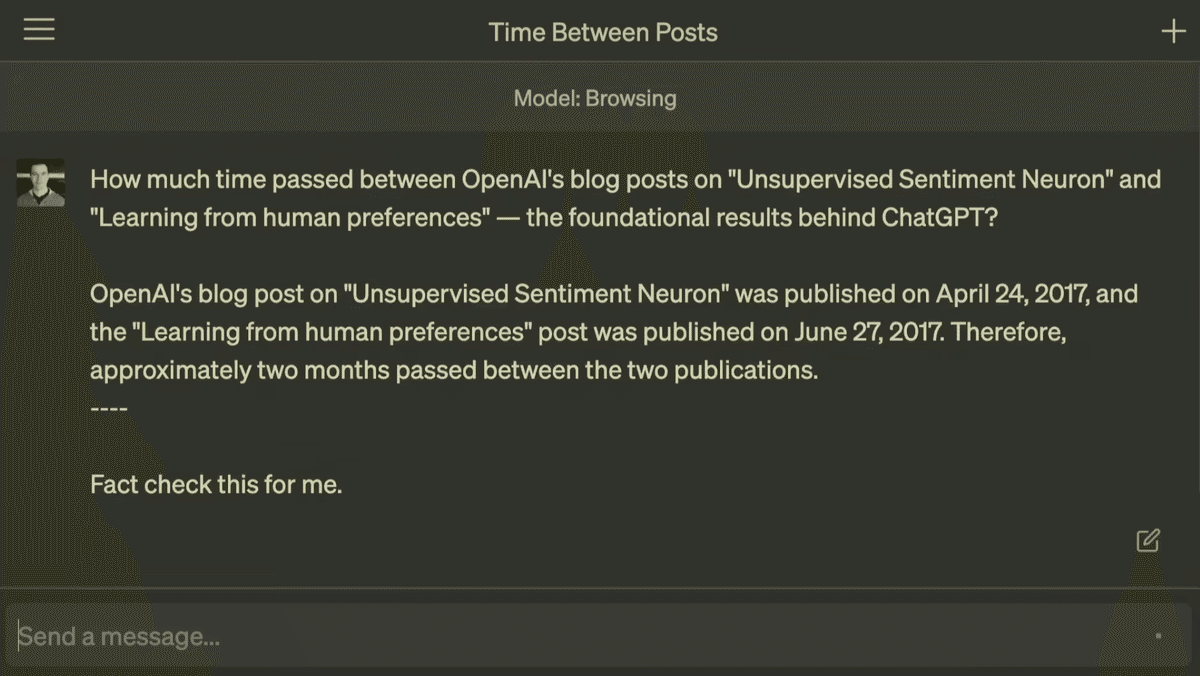

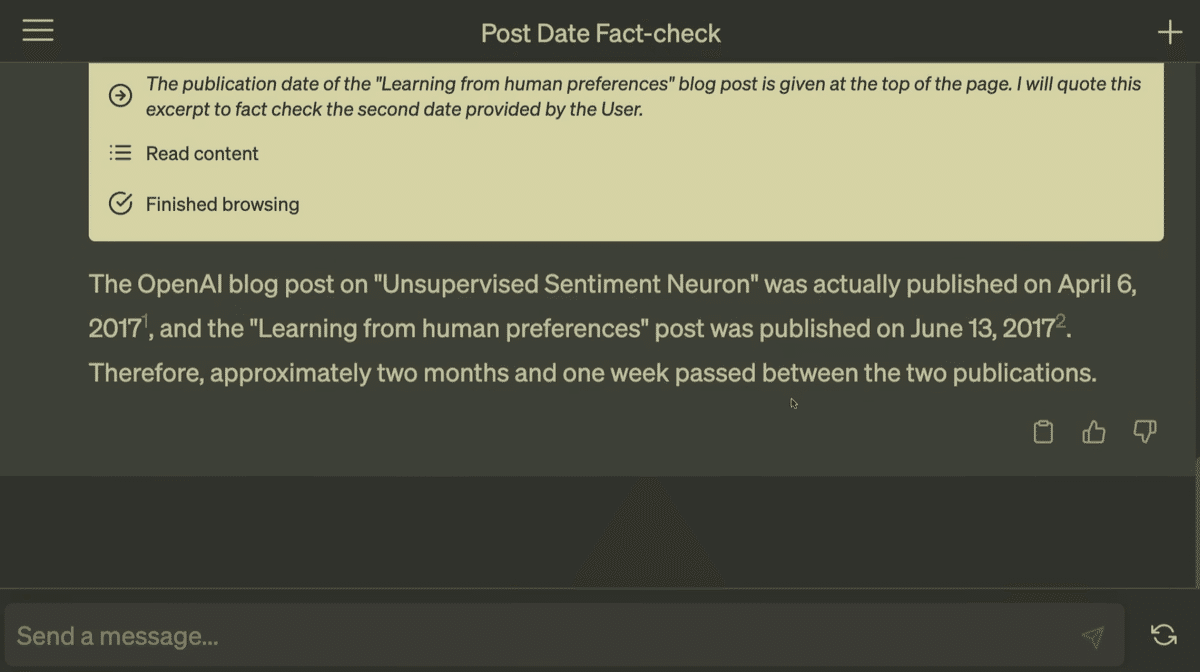

The Potential of "AI Fact-Checking"

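Greg Brockman also explained the potential of AI fact-checking, which plays an important role in ensuring the accuracy of ChatGPT's answers. In the talk, he describes a person using the fact-checking tool as producing feedback data that helps another AI become more useful, which is how he expects high-quality human supervision to scale to harder tasks.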

ChatGPT's answer: The OpenAI blog post "Unsupervised Sentiment Neuron" was in fact published on April 6, 2017, and the post "Learning from human preferences" was published on June 13, 2017. Approximately two months and one week therefore passed between the two posts.
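The interval in the answer above can be checked with a few lines of date arithmetic. The following is a minimal sketch using only the Python standard library. The helper name `months_and_days_between` is hypothetical and is not something shown in the talk; only the two publication dates come from the answer quoted above.

```python
import calendar
from datetime import date


def months_and_days_between(start: date, end: date) -> tuple[int, int]:
    """Return (whole calendar months, leftover days) from start to end.

    Hypothetical helper for illustration; assumes start <= end.
    """
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        # The last calendar month is not complete yet.
        months -= 1
    # Shift the start date forward by the whole months counted above.
    total = start.month - 1 + months
    anchor_year = start.year + total // 12
    anchor_month = total % 12 + 1
    # Clamp the day so that, e.g., the 31st maps onto a 30-day month.
    last_day = calendar.monthrange(anchor_year, anchor_month)[1]
    anchor = date(anchor_year, anchor_month, min(start.day, last_day))
    return months, (end - anchor).days


sentiment_neuron = date(2017, 4, 6)    # "Unsupervised Sentiment Neuron"
human_preferences = date(2017, 6, 13)  # "Learning from human preferences"

months, days = months_and_days_between(sentiment_neuron, human_preferences)
total_days = (human_preferences - sentiment_neuron).days
print(months, days, total_days)
```

With these two dates the sketch reports two whole months plus seven days (68 days in total), which agrees with the "two months and one week" in the fact-checked answer.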

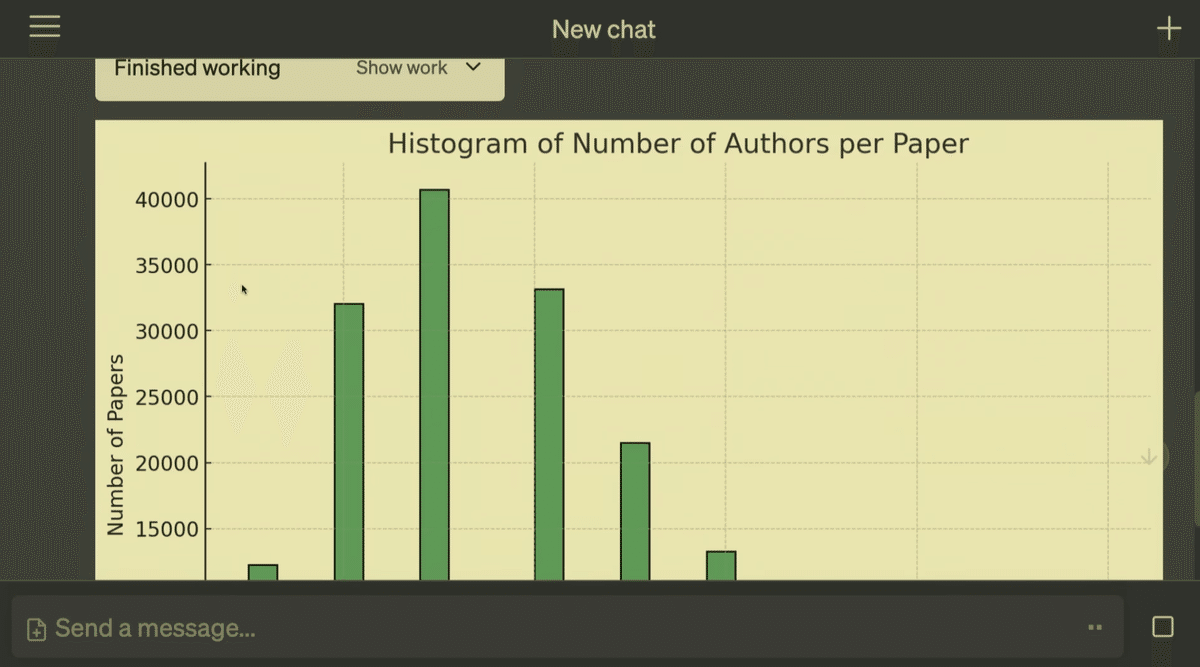

Data Analysis with ChatGPT

Next, he showed ChatGPT analyzing data with a Python interpreter and reporting what it found. Beyond the analysis itself, the demo also covers uploading a file to ChatGPT and generating graphs.
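To give a feel for the kind of code such an interpreter might write, here is a small sketch using only the Python standard library. Everything in it is an assumption made for illustration: the talk does not show the generated code, the sample rows and the column names `title`, `year`, and `num_authors` are hypothetical, and the linear scaling used for the partial year is just one simple way to project a full-year count from the April 13 cut-off mentioned in the talk.

```python
import csv
import io
from collections import Counter
from datetime import date

# Hypothetical sample rows; the real file and its column names are not given.
SAMPLE_CSV = """title,year,num_authors
Paper A,2021,3
Paper B,2021,2
Paper C,2022,3
Paper D,2022,4
Paper E,2022,3
Paper F,2023,1
"""


def summarize(csv_text: str) -> tuple[Counter, Counter]:
    """Count papers by number of authors and by publication year."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    authors_hist = Counter(int(row["num_authors"]) for row in rows)
    papers_per_year = Counter(int(row["year"]) for row in rows)
    return authors_hist, papers_per_year


def project_full_year(count_so_far: int, cutoff: date) -> float:
    """Scale a partial-year count linearly to a full year (an assumption)."""
    days_elapsed = cutoff.timetuple().tm_yday
    days_in_year = date(cutoff.year, 12, 31).timetuple().tm_yday
    return count_so_far * days_in_year / days_elapsed


authors_hist, papers_per_year = summarize(SAMPLE_CSV)
projected_2023 = project_full_year(papers_per_year[2023], date(2023, 4, 13))

print(sorted(authors_hist.items()))     # data for an authors-per-paper histogram
print(sorted(papers_per_year.items()))  # data for a papers-per-year time series
print(round(projected_2023, 2))         # projected full-year count for 2023
```

The two counters hold the data behind a histogram and a time series. Plotting them would need a charting library, which is left out here to keep the sketch self-contained.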

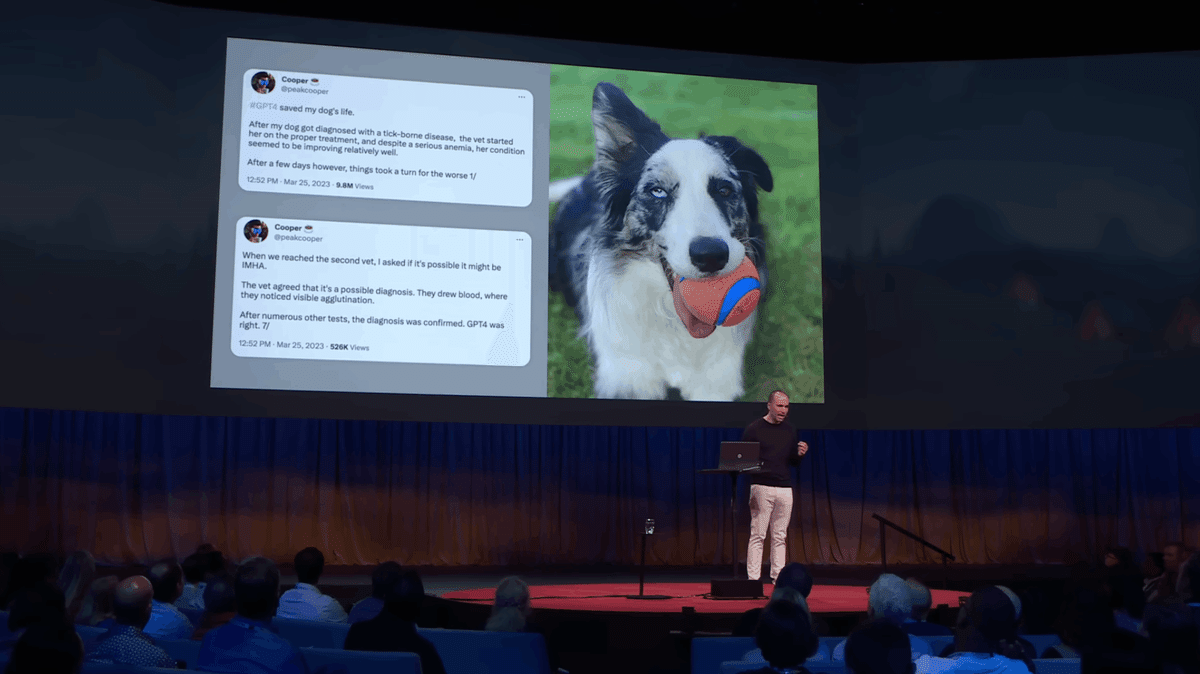

An Example of ChatGPT in the Medical Field

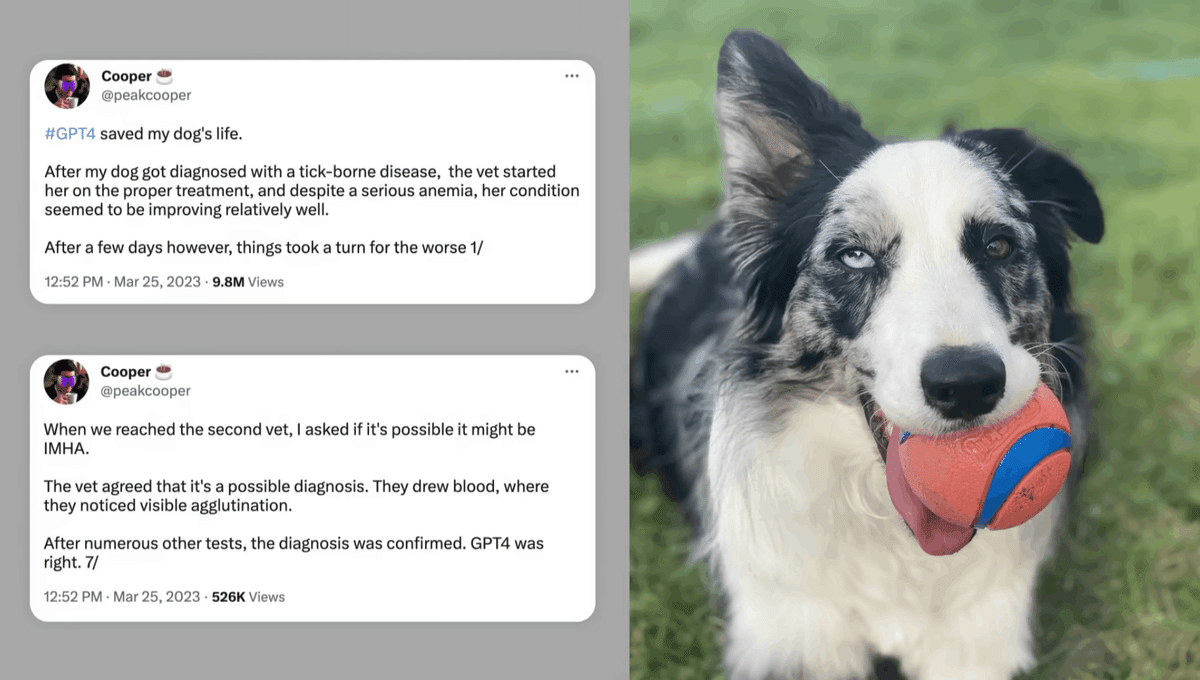

Greg Brockman then said that ChatGPT can act as a brainstorming partner in many fields, including medicine. As an example, he presented a story that had been posted on Twitter.

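[Text of the tweets posted on March 23, 2023, beginning "#GPT4 saved my dog's life"]

#GPT4 saved my dog's life.

After my dog was diagnosed with a tick-borne disease, the vet started the appropriate treatment, and despite severe anemia, the condition seemed to be improving relatively well.

But a few days later, things took a turn for the worse. 1/

---------------

When we reached a second vet, I asked whether it could be IMHA.

The vet agreed that it was a possible diagnosis. They drew blood and saw visible agglutination in it.

After various other tests, the diagnosis was confirmed. GPT4 was right. 7/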

Summary

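This article covered what Greg Brockman, co-founder of OpenAI, presented in his TED talk. OpenAI aims to realize AI technology that benefits all of humanity and continues to work toward that mission. The development of AI from here on will be worth watching closely. If you would like to know more about the topics covered here, please watch the video via the link below.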


[Video transcript generated by Whisper]

We started OpenAI seven years ago because we felt like something really interesting was happening in AI, and we wanted to help steer it in a positive direction. It's honestly just really amazing to see how far this whole field has come since then. And it's really gratifying to hear from people like Raymond, who are using the technology we are building and others for so many wonderful things. We hear from people who are excited, we hear from people who are concerned, we hear from people who feel both those emotions at once. And honestly, that's how we feel. Above all, it feels like we're entering an historic period right now where we as a world are going to define a technology that will be so important for our society going forward. And I believe that we can manage this for good. So today I want to show you the current state of that technology and some of the underlying design principles that we hold dear. So the first thing I'm going to show you is what it's like to build a tool for an AI rather than building it for a human. So we have a new DALL-E model which generates images, and we are exposing it as an app for ChatGPT to use on your behalf. And you can do things like ask, you know, suggest a nice post-TED meal, and draw a picture of it. (Laughter) Now, you get all of the sort of ideation and creative back and forth and taking care of the details for you, that you get out of ChatGPT, and here we go. It's not just the idea for the meal, but a very, very detailed spread. So let's see what we're going to get. But ChatGPT doesn't just generate images in this case. It doesn't just generate text, it also generates an image. And that is something that really expands the power of what it can do on your behalf in terms of carrying out your intent. And I'll point out this is all live demo, this is all generated by the AI as we speak, so I actually don't even know what we're going to see. This looks wonderful. (Applause) Now I'm getting hungry just looking at it.
Now, we've extended ChatGPT with other tools too. For example, memory. You can, say, save this for later. And the interesting thing about these tools is they're very inspectable. So you get this little pop-up here that says "Use the DALL-E app." And by the way, this is coming to all ChatGPT users over upcoming months. And you can look under the hood and see that what it actually did was write a prompt, just like a human could. And so you sort of have this ability to inspect how the machine is using these tools, which allows us to provide feedback to them. Now it's saved for later, and let me show you what it's like to use that information and to integrate with other applications too. You can say, "Now make a shopping list for the tasty thing I was suggesting earlier." I'm going to make it a little tricky for the AI: "And tweet it out for all the TED viewers out there." (Laughter) So if you do make this wonderful, wonderful meal, I definitely want to know how it tastes. But you can see that ChatGPT is selecting all these different tools without me having to tell it explicitly which ones to use in any situation. And this, I think, shows a new way of thinking about the user interface. Like, we are so used to thinking of, well, we have these apps, we click between them, we copy-paste between them. Usually, it's a great experience within an app as long as you know the menus and know all the options. Yes, I would like you to. Yes, please. Always good to be polite. (Laughter) And by having this unified language interface on top of tools, the AI is able to take away all those details from you, so you don't have to be the one who spells out every single little piece of what's supposed to happen. And as I said, this is a live demo, so sometimes the unexpected will happen to us. But let's take a look at the Instacart shopping list while we're at it. And you can see we sent a list of ingredients to Instacart. Here's everything you need.
And the thing that's really interesting is that the traditional UI is still very valuable. If you look at this, you still can click through it and modify the actual quantities. That's something that I think shows that they're not going away, traditional UIs. It's just we have a new augmented way to build them. Now we have a tweet that's been drafted for our review, which is also a very important thing. We can click run, and there we are. We're the manager. We're able to inspect. We're able to change the work of the AI if we want to. After this talk, you will be able to access this yourself. There we go. Cool. So, thank you, everyone. (Applause) So we will cut back to the slides. Now, the important thing about how we build this, it's not just about building these tools. It's about teaching the AI how to use them. Like, what do we even want it to do when we ask these very high-level questions? And to do this, we use an old idea. If you go back to Alan Turing's 1950 paper on the Turing test, he says, "Look, you'll never program an answer to this. Instead, you can learn it. You could build a machine like a human child and then teach it through feedback, have a human teacher who provides rewards and punishments and does things that are either good or bad." And this is exactly how we train ChatGPT. It's a two-step process. First, we produce what Turing would have called a child machine through an unsupervised learning process. We just show it the whole world, the whole internet, and say, "Predict what comes next in text you've never seen before." And this process imbues it with all sorts of wonderful skills. For example, if you're shown a math problem, the only way to actually complete that math problem, say, what comes next, that green nine up there, is to actually solve the math problem. But we actually have to do a second step, too, which is to teach the AI what to do with those skills. And for this, we provide feedback.
We have the AI try out multiple things, give us multiple suggestions, and then a human rates them, says, "This one's better than that one." And this reinforces not just the specific thing that the AI said, but very importantly, the whole process that the AI used to produce that answer. And this allows it to generalize, it allows it to teach, to sort of infer your intent and apply it in scenarios that it hasn't seen before, that it hasn't received feedback. Now, sometimes the things we have to teach the AI are not what you'd expect. For example, when we first showed GPT-4 to Khan Academy, they said, "Wow, this is so great. We're going to be able to teach students wonderful things." Only one problem -- it doesn't double-check students' math. If there's some bad math in there, it will happily pretend that one plus one equals three and run with it. So we had to collect some feedback data. Sal Khan himself was very kind and offered 20 hours of his own time to provide feedback to the machine alongside our team. And over the course of a couple months, we were able to teach the AI that, hey, you really should push back on humans in this specific kind of scenario. And we've actually made lots and lots of improvements to the models this way. And when you push that thumbs down in ChatGPT, that actually is kind of like sending up a bat signal to our team to say, here's an area of weakness where you should gather feedback. And so when you do that, that's one way that we really listen to our users and make sure we're building something that's more useful for everyone. Now, providing high-quality feedback is a hard thing. If you think about asking a kid to clean their room, if all you're doing is inspecting the floor, you don't know if you're just teaching them to stuff all the toys in the closet. This is a nice DALL-E-generated image, by the way. And the same sort of reasoning applies to AI.
As we move to harder tasks, we will have to scale our ability to provide high-quality feedback. But for this, the AI itself is happy to help. It's happy to help us provide even better feedback and to scale our ability to supervise the machine as time goes on. And let me show you what I mean. For example, you can ask GPT-4 a question like this, of how much time passed between these two foundational blogs on unsupervised learning and learning from human feedback, and the model says, "Two months passed." But is it true? These models are not 100 percent reliable, although they're getting better every time we provide some feedback. But we can actually use the AI to fact-check, and it can actually check its own work. You can say, "Fact-check this for me." Now, in this case, I've actually given the AI a new tool. This one is a browsing tool, where the model can issue search queries and click into web pages. And it actually writes out its whole chain of thought as it does it. It says, "I'm going to search for this," and it actually does the search. It then finds the publication date in the search results. It then is issuing another search query, it's going to click into the blog post. And all of this you could do, but it's a very tedious task. It's not a thing that humans really want to do. It's much more fun to be in the driver's seat, to be in this manager's position, where you can, if you want, triple-check the work. And out come citations, so you can actually go and very easily verify any piece of this whole chain of reasoning, and it actually turns out two months was wrong. Two months and one week. (Laughter) That was correct. (Applause) We'll cut back to the slide. And so, the thing that's so interesting to me about this whole process is that it's this many-step collaboration between a human and an AI, because a human using this fact-checking tool is doing it in order to produce data for another AI to become more useful to a human.
And I think this really shows the shape of something that we should expect to be much more common in the future, where we have humans and machines very carefully and delicately designed in how they fit into a problem and how we want to solve that problem. We make sure that the humans are providing the management, the oversight, the feedback, and that the machines are operating in a way that's inspectable and trustworthy. And together, we're able to actually create even more trustworthy machines. And I think that over time, if we get this process right, we will be able to solve impossible problems. And to give you a sense of just how impossible I'm talking, I think we're going to be able to rethink almost every aspect of how we interact with computers. For example, think about spreadsheets. They've been around in some form since, we'll say, 40 years ago with VisiCalc. I don't think they've really changed that much in that time. And here is a specific spreadsheet of all the AI papers on the arXiv for the past 30 years. There's about 167,000 of them. And you can see the data right here. But let me show you the ChatGPT take on how to analyze a data set like this. So we can give ChatGPT access to yet another tool, this one, a Python interpreter. So it's able to run code, just like a data scientist would. And so you can just literally upload a file and ask questions about it. And very helpfully, it knows the name of the file. And it's like, oh, this is CSV, comma-separated value file. I'll parse it for you. The only information here is the name of the file, the column names, like you saw, and then the actual data. And from that, it's able to infer what these columns actually mean. Like, that semantic information wasn't in there. It has to put together its world knowledge of knowing that, oh yeah, arXiv is the site that people submit papers, and therefore that's what these things are, and that these are integer values, and so therefore it's a number of authors in the paper.
Like all of that, that's work for a human to do, and AI's happy to help with it. Now I don't even know what I want to ask. So fortunately you can ask the machine, can you make some exploratory graphs? And once again, this is a super high-level instruction with lots of intent behind it, but I don't even know what I want, and the AI kind of has to infer what I might be interested in. And so it comes up with some good ideas, I think. So a histogram of the number of authors per paper, time series of papers per year, word cloud of the paper titles. All of that I think will be pretty interesting to see. And the great thing is, it can actually do it. Here we go, a nice bell curve. You see that three is kind of the most common. It's going to then write a, it's gonna make this nice plot of the papers per year. Something crazy is happening in 2023, though. Looks like we were on an exponential and it dropped off a cliff. What could be going on there? And by the way, all this is Python code you can inspect. And then we'll see the word cloud. And so you can see all these wonderful things that appear in these titles. But I'm pretty unhappy about this 2023 thing. It makes this year look really bad. Of course, the problem is that the year's not over. So I'm going to push back on the machine. So, April 13th is the cut-off date, I believe. So we'll see. This is a kind of ambitious one! So, you know, again, I feel like there was more I wanted out of the machine here. I really wanted it to, like, notice this thing. Maybe it's a little bit of an overreach for it to have sort of inferred magically that this is what I wanted. But I inject my intent. I provide this additional piece of, you know, sort of guidance. And under the hood, the AI is just writing code again. So if you want to inspect what it's doing, it's very possible. And now, it does the correct projection. (Applause) If you notice, it even updates the title. I didn't ask for that, but it knows what I want. 
(Laughter) Now, we'll cut back to the slide again. This slide shows a parable of how I think we -- a vision of how we may end up using this technology in the future. A person brought his very sick dog to the vet, and the veterinarian made a bad call to say, "Let's just wait and see," and the dog would not be here today had he listened. In the meanwhile, he provided the blood test, like the full medical records, to GPT-4, which said, "I am not a vet, you need to talk to a professional. Here are some hypotheses." He brought that information to a second vet, who used it to save the dog's life. Now, these systems, they're not perfect. You cannot overly rely on them. But this story, I think, shows that the human with a medical professional and with ChatGPT as a brainstorming partner was able to achieve an outcome that would not have happened otherwise. I think this is something we should all reflect on and think about as we consider how to integrate these systems into our world. And one thing I believe really deeply is that getting AI right is going to require participation from everyone. And that's for deciding how we want it to slot in. That's for setting the rules of the road for what an AI will and won't do. And if there's one thing to take away from this talk, it's that this technology just looks different, just different from anything people had anticipated. And so we all have to become literate, and that's honestly one of the reasons we released ChatGPT. Together, I believe that we can achieve the OpenAI mission of ensuring that artificial general intelligence benefits all of humanity. Thank you.